Implementing AI Agent Workflow Versioning and Rollback Strategies in Production

Learn how to implement robust versioning and rollback strategies for AI agent workflows in production environments. Drawing from real-world deployments on Google Cloud, this guide covers practical approaches to managing workflow iterations, handling version conflicts, and ensuring zero-downtime rollbacks.

Brandon Lincoln Hendricks

Autonomous AI Agent Architect

The Hidden Complexity of AI Agent Evolution

After shipping our fifth major workflow update last quarter, our AI agent system crashed spectacularly. Not because the new Gemini 2.0 Ultra integration failed. Not because our vector embeddings were corrupted. The workflow logic itself, the intricate decision trees and tool orchestrations we'd carefully crafted, contained a subtle incompatibility that only surfaced under specific conditions.

This is the reality of production AI agent systems: the workflows that govern agent behavior are as critical as the models themselves, and they need robust versioning and rollback strategies.

What Makes AI Agent Workflow Versioning Different?

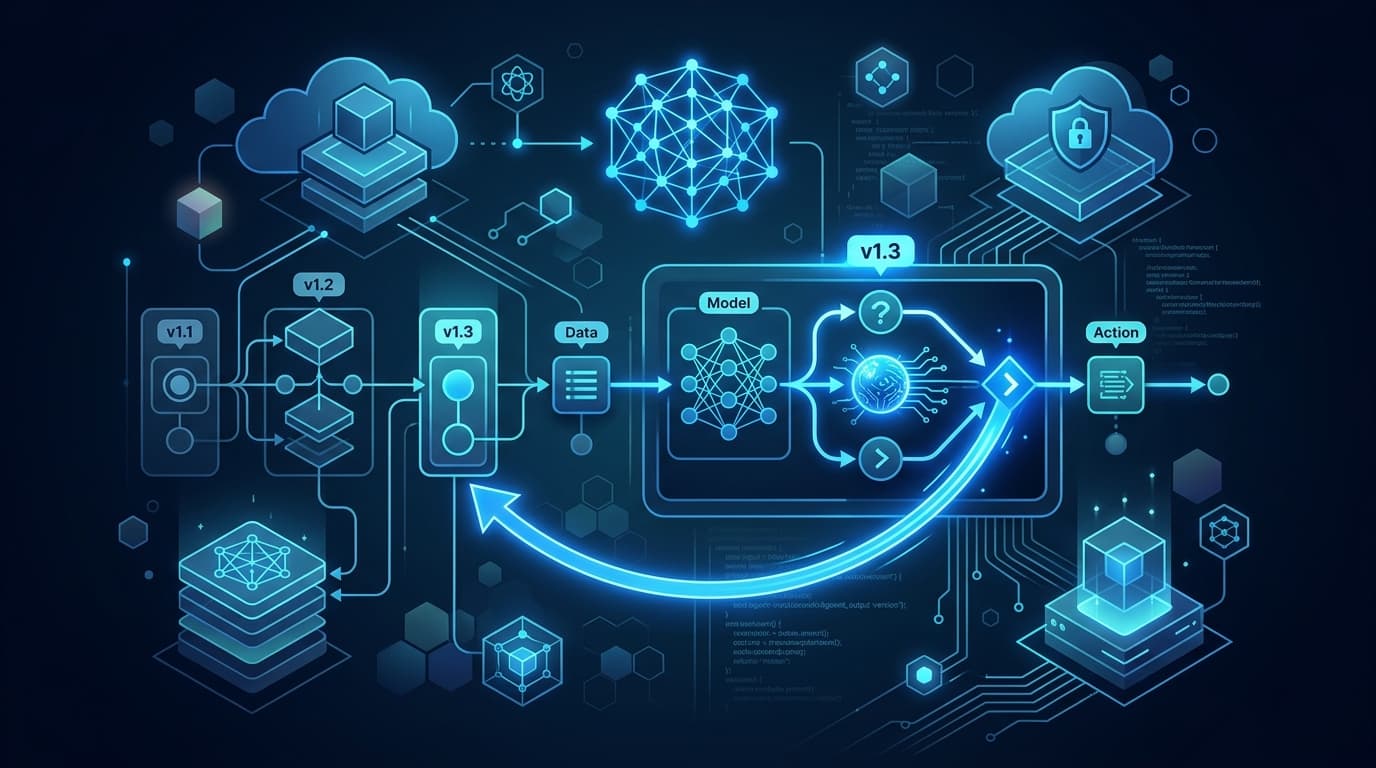

AI agent workflow versioning extends beyond traditional software versioning because workflows encompass multiple interdependent components. A single workflow version includes prompt templates, decision tree logic, tool call sequences, error handling strategies, and state management rules. When I implement versioning for production agents on Google Cloud, each workflow version becomes an immutable package stored in Cloud Storage with comprehensive metadata tracked in BigQuery.

The complexity multiplies when you consider that workflows often reference multiple AI models. A customer service agent workflow might use Gemini Pro for initial query understanding, Gemini Flash for quick lookups, and specialized fine-tuned models for domain-specific tasks. Each workflow version must specify exact model versions and gracefully handle model unavailability.

Architecting Version Management on Google Cloud

Here's the versioning architecture I've refined across multiple production deployments:

Core Storage Layer

Workflow definitions live in Cloud Storage buckets with Object Versioning enabled. Each workflow version is stored as a JSON or YAML file containing:

- Workflow ID and version number
- Timestamp and author metadata
- Complete prompt templates
- Decision tree definitions
- Tool integration configurations
- Model version requirements
- Rollback instructions
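To make the structure above concrete, here is a minimal sketch of such a definition as a Python dataclass. The field names, the `rollback_to` field, and the `workflows/<id>/<version>.json` object path are illustrative assumptions, not a fixed schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class WorkflowVersion:
    """Hypothetical manifest for one immutable workflow version."""
    workflow_id: str
    version: str               # major.minor.patch
    created_at: str            # ISO 8601 timestamp
    author: str
    prompt_templates: dict
    decision_tree: dict
    tools: list
    model_requirements: dict   # role -> model version (names are assumptions)
    rollback_to: Optional[str] = None  # version to fall back to, if any

    def to_json(self) -> str:
        # sort_keys keeps the serialization stable, so the hash is reproducible.
        return json.dumps(asdict(self), sort_keys=True)

    def content_hash(self) -> str:
        # A content hash lets a pipeline detect accidental edits to a
        # version that is supposed to be immutable.
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()

    def object_path(self) -> str:
        # Assumed bucket layout: one object per workflow version.
        return f"workflows/{self.workflow_id}/{self.version}.json"
```

Storing the hash alongside the metadata row gives a cheap integrity check whenever a version is loaded.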

BigQuery serves as the metadata warehouse, tracking performance metrics, deployment history, and version relationships. This enables complex queries like "find all workflows using Gemini Pro 1.5 that showed performance degradation after April 1st."

Version Creation Pipeline

Cloud Build automates the version creation process. When developers push workflow changes to Cloud Source Repositories, the pipeline:

- Validates workflow syntax and structure
- Runs compatibility checks against current production versions
- Executes test suites specific to that workflow
- Generates version artifacts and stores them in Cloud Storage
- Updates BigQuery metadata tables
- Creates Cloud Monitoring dashboards for the new version
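The first two pipeline steps can be sketched as a plain function that a build step might call. The required keys and the rule that a candidate must be strictly newer than production are assumptions for illustration; a real compatibility check would cover far more.

```python
import re

# Assumed required keys; adjust to your own workflow schema.
REQUIRED_KEYS = {
    "workflow_id", "version", "prompt_templates",
    "decision_tree", "tools", "model_requirements",
}
SEMVER = re.compile(r"^(\d+)\.(\d+)\.(\d+)$")


def parse_version(text: str) -> tuple:
    """Parse 'major.minor.patch' into a tuple of ints."""
    match = SEMVER.match(text)
    if match is None:
        raise ValueError(f"not a major.minor.patch version: {text!r}")
    return tuple(int(part) for part in match.groups())


def check_candidate(definition: dict, production_version: str) -> list:
    """Return a list of problems; an empty list means the candidate passes."""
    missing = sorted(REQUIRED_KEYS - set(definition))
    errors = [f"missing key: {key}" for key in missing]
    try:
        candidate = parse_version(str(definition.get("version", "")))
    except ValueError as exc:
        errors.append(str(exc))
        return errors
    # Simplified stand-in for a compatibility check: versions must increase.
    if candidate <= parse_version(production_version):
        errors.append(
            f"version {definition['version']} is not newer than "
            f"production {production_version}"
        )
    return errors
```

Returning a list of problems rather than raising on the first one lets the build log show every issue in a single run.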

Deployment Orchestration

Vertex AI Agent Engine handles the actual agent runtime, but Traffic Director manages version routing. This separation allows sophisticated deployment patterns:

Canary Deployments: New workflow versions initially handle 5% of traffic, automatically increasing based on success metrics.

Feature-Flag Routing: Specific customers or request types route to new versions while others remain on stable versions.

Shadow Testing: New versions process requests in parallel without affecting actual responses, allowing performance comparison.
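The canary and feature-flag patterns reduce to a small routing decision. The sketch below shows that decision in application code purely for illustration; in the architecture described here the traffic layer would own it, and the function and parameter names are hypothetical.

```python
import hashlib


def bucket(request_id: str) -> int:
    """Map a request ID to a stable bucket in the range [0, 100)."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def choose_version(request_id: str, customer_id: str, *, stable: str,
                   canary: str, canary_percent: int, pinned: dict) -> str:
    """Pick a workflow version for one request."""
    # Feature-flag routing: pinned customers always get their pinned version.
    if customer_id in pinned:
        return pinned[customer_id]
    # Canary routing: a fixed share of buckets goes to the new version.
    return canary if bucket(request_id) < canary_percent else stable
```

Hashing the request ID instead of drawing a random number keeps routing deterministic, so a retried request lands on the same version.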

How Do You Implement Safe Rollback Strategies?

Rollback capability determines whether you can innovate fearlessly or live in constant fear of breaking production. The rollback system I've developed uses multiple defensive layers.

Instant Rollback Architecture

Traffic Director maintains active connections to multiple workflow versions simultaneously. When a rollback triggers, it simply adjusts routing rules rather than redeploying code. This achieves rollback times under 10 seconds for most scenarios.
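The idea of rolling back by changing the traffic split, not by redeploying, can be sketched with an in-memory table. `RoutingTable` is a hypothetical stand-in for the real routing rules, not a Traffic Director API.

```python
class RoutingTable:
    """Hypothetical in-memory stand-in for traffic-split rules."""

    def __init__(self, weights: dict):
        if sum(weights.values()) != 100:
            raise ValueError("weights must sum to 100")
        self.weights = dict(weights)

    def roll_back(self, bad_version: str, target_version: str) -> None:
        """Shift all of bad_version's traffic share to target_version."""
        if bad_version == target_version:
            raise ValueError("rollback target must differ from the bad version")
        for version in (bad_version, target_version):
            if version not in self.weights:
                raise KeyError(f"unknown version: {version}")
        # Both versions stay deployed; only the split changes.
        self.weights[target_version] += self.weights[bad_version]
        self.weights[bad_version] = 0
```

Because the bad version keeps an entry with weight 0, it remains available for debugging and can receive traffic again once fixed.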

Cloud Armor rules provide an additional safety net, allowing request filtering based on headers that specify preferred workflow versions. This helps when specific API clients need to pin to particular versions during their own deployment cycles.

State Management During Rollbacks

The trickiest part of rollbacks involves in-flight requests and stateful conversations. Firestore maintains conversation state with clear version tagging. When rolling back, the system:

- Identifies active conversations using the affected version
- Attempts graceful migration to the rollback version
- Falls back to conversation restart if migration fails
- Logs all affected sessions for later analysis
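A minimal sketch of those four steps, using in-memory objects in place of Firestore documents. The `Conversation` type and the `migrate` callback are hypothetical; the callback is assumed to raise when a conversation's state cannot be converted.

```python
import logging
from dataclasses import dataclass, field
from typing import Callable

logger = logging.getLogger("rollback")


@dataclass
class Conversation:
    session_id: str
    workflow_version: str
    state: dict = field(default_factory=dict)


def roll_back_conversations(conversations: list, bad_version: str,
                            target_version: str,
                            migrate: Callable[[dict], dict]) -> dict:
    """Move conversations off bad_version, restarting any that cannot migrate."""
    report = {"migrated": [], "restarted": []}
    for conv in conversations:
        if conv.workflow_version != bad_version:
            continue  # not affected by this rollback
        try:
            conv.state = migrate(conv.state)
            report["migrated"].append(conv.session_id)
        except Exception:
            # Migration failed: fall back to a clean conversation restart.
            conv.state = {}
            report["restarted"].append(conv.session_id)
        conv.workflow_version = target_version
    logger.warning("rollback %s -> %s affected sessions: %s",
                   bad_version, target_version, report)
    return report
```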

Automated Rollback Triggers

Cloud Monitoring custom metrics track workflow-specific health indicators:

- Task completion rate below 85%
- Average response time exceeding 2x baseline
- Error rate above 5% for any critical path
- Token usage exceeding 150% of projections

When any threshold is breached, Cloud Functions automatically initiate a rollback and page the on-call engineers.
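The four thresholds translate directly into a check a function could run on each metrics snapshot. The `HealthSnapshot` shape and the breach labels are assumptions; the numeric limits are the ones listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HealthSnapshot:
    """Hypothetical per-version metrics for one evaluation window."""
    task_completion_rate: float       # 0.0 to 1.0
    avg_response_ms: float
    baseline_response_ms: float
    critical_path_error_rates: dict   # path name -> error rate (0.0 to 1.0)
    tokens_used: int
    tokens_projected: int


def breached_thresholds(s: HealthSnapshot) -> list:
    """Return the names of every breached threshold (empty means healthy)."""
    breaches = []
    if s.task_completion_rate < 0.85:
        breaches.append("task_completion_rate")
    if s.avg_response_ms > 2 * s.baseline_response_ms:
        breaches.append("response_time")
    for path, rate in sorted(s.critical_path_error_rates.items()):
        if rate > 0.05:
            breaches.append(f"error_rate:{path}")
    if s.tokens_used > 1.5 * s.tokens_projected:
        breaches.append("token_usage")
    return breaches
```

A caller would trigger the rollback and the page whenever the returned list is non-empty, and include the list in the alert so the on-call engineer sees which limit tripped.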

Managing Complex Version Dependencies

Production AI agents rarely operate in isolation. They integrate with external APIs, reference shared knowledge bases, and coordinate with other agents. Version management must account for these dependencies.

Dependency Mapping

Each workflow version includes a dependency manifest:

- Required Gemini model versions
- Vertex AI Search datastore versions
- External API versions and endpoints
- Shared prompt library versions
- Knowledge base snapshot IDs

A pre-deployment check queries these manifests in BigQuery to prevent incompatible deployments. If a new workflow version requires Gemini Pro 2.0 but production only has 1.5, the deployment is blocked automatically.
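The blocking rule itself is a small set comparison. In this sketch the manifest and the production inventory are plain dictionaries, and the dependency names are made up for the example.

```python
def blocking_dependencies(required: dict, available: dict) -> list:
    """List every required dependency version that production does not have.

    required:  dependency name -> exact version the workflow needs
    available: dependency name -> set of versions present in production
    """
    blockers = []
    for name, version in sorted(required.items()):
        if version not in available.get(name, set()):
            blockers.append(f"{name}=={version}")
    return blockers


# Example: the workflow needs Gemini Pro 2.0, production only has 1.5.
manifest = {"gemini-pro": "2.0", "shared-prompts": "3.1"}
production = {"gemini-pro": {"1.5"}, "shared-prompts": {"3.0", "3.1"}}
blockers = blocking_dependencies(manifest, production)
if blockers:
    print("deployment blocked:", ", ".join(blockers))
```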

Cross-Version Communication

When multiple workflow versions run simultaneously, they must communicate seamlessly. I implement versioned message formats using Protocol Buffers, with Cloud Pub/Sub handling inter-version messaging. Older versions include adapters that translate newer message formats into the format they understand, so mixed versions can keep exchanging messages.
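The adapter idea can be sketched with plain dictionaries standing in for Protocol Buffers messages. The `schema_version` field and the v2-to-v1 change shown here (a nested `customer` object flattened to `customer_id`) are invented for illustration.

```python
def downgrade_v2_to_v1(message: dict) -> dict:
    """Hypothetical adapter: v2 nests the customer, v1 expects a flat ID."""
    body = dict(message["body"])       # copy so the input is not mutated
    customer = body.pop("customer")
    body["customer_id"] = customer["id"]
    return {"schema_version": 1, "body": body}


# One adapter per schema version, each stepping down by exactly one version.
DOWNGRADES = {2: downgrade_v2_to_v1}


def read_as(message: dict, supported_version: int) -> dict:
    """Step a newer message down until the receiver can understand it."""
    while message["schema_version"] > supported_version:
        step = DOWNGRADES.get(message["schema_version"])
        if step is None:
            raise ValueError(
                f"no adapter for schema version {message['schema_version']}"
            )
        message = step(message)
    return message
```

Chaining single-step adapters means each new schema version only needs one new function, at the cost of dropping fields the older format cannot represent.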

What Testing Strategies Ensure Version Stability?

Testing AI agent workflows requires different approaches than traditional software testing. Deterministic unit tests help but don't capture the full behavioral complexity.

Behavioral Testing Framework

Each workflow version maintains a golden dataset of conversations in BigQuery. These represent expected agent behavior across various scenarios. New versions must produce equivalent or improved outcomes for these conversations.

The testing pipeline uses Vertex AI to run hundreds of conversation variations, comparing:

- Task completion success rates
- Number of turns to resolution
- Tool call patterns
- Token efficiency
- Response quality metrics
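A gate over three of these metrics might look like the sketch below. The per-conversation result shape and the 2% tolerance are assumptions; "equivalent or improved" is interpreted here as "no worse than baseline beyond that tolerance".

```python
from statistics import mean


def summarize(results: list) -> dict:
    """Aggregate per-conversation results into comparable metrics."""
    return {
        "completion_rate": mean(1.0 if r["completed"] else 0.0 for r in results),
        "avg_turns": mean(r["turns"] for r in results),
        "avg_tokens": mean(r["tokens"] for r in results),
    }


def regressions(baseline: list, candidate: list, tolerance: float = 0.02) -> list:
    """Return the metrics on which the candidate is worse than the baseline."""
    b, c = summarize(baseline), summarize(candidate)
    failed = []
    # Higher is better for completion rate.
    if c["completion_rate"] < b["completion_rate"] - tolerance:
        failed.append("completion_rate")
    # Lower is better for turns and tokens.
    for metric in ("avg_turns", "avg_tokens"):
        if c[metric] > b[metric] * (1 + tolerance):
            failed.append(metric)
    return failed
```

Tool call patterns and response quality need their own comparisons (sequence diffs, rubric or model-based scoring) and are left out of this sketch.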

Regression Detection

BigQuery ML models trained on historical performance data detect subtle regressions that might escape rule-based tests. These models identify unusual patterns in:

- Response time distributions
- Error clustering
- Token usage anomalies
- Tool call sequences
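As a much simpler stand-in for a trained BigQuery ML model, the sketch below flags a metric that sits far outside its historical distribution using a z-score. This is a swapped-in technique for illustration only, and the threshold of 3 standard deviations is an assumption.

```python
from statistics import mean, stdev


def is_anomalous(history: list, observed: float, z_threshold: float = 3.0) -> bool:
    """Flag an observation that deviates strongly from historical values."""
    if len(history) < 2:
        raise ValueError("need at least two historical values")
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is unusual.
        return observed != mu
    return abs(observed - mu) / sigma > z_threshold
```

A z-score only looks at one metric at a time and assumes a roughly stable baseline; patterns such as error clustering or unusual tool call sequences need richer models.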

Production Shadowing

Before full deployment, new versions shadow production traffic for at least 48 hours. Cloud Logging captures both versions' outputs, with Cloud Functions comparing results and flagging divergences. This catches edge cases that synthetic tests miss.
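The comparison step can be sketched as a function over paired results keyed by request ID. The record shape (`completed`, `tool_calls`) is hypothetical; a real comparison would read both versions' structured log entries.

```python
def find_divergences(production: dict, shadow: dict) -> list:
    """Compare shadow results against production, request by request.

    Both arguments map request_id -> {"completed": bool, "tool_calls": list}.
    Returns (request_id, reason) pairs for every flagged request.
    """
    flagged = []
    for request_id, prod in production.items():
        shad = shadow.get(request_id)
        if shad is None:
            flagged.append((request_id, "missing shadow result"))
        elif prod["completed"] != shad["completed"]:
            flagged.append((request_id, "completion differs"))
        elif prod["tool_calls"] != shad["tool_calls"]:
            flagged.append((request_id, "tool call sequence differs"))
    return flagged
```

The sketch compares outcomes and tool calls, not response text, because model output wording varies between runs even when behavior is equivalent.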

Performance Implications of Version Management

Versioning systems can introduce latency and complexity. Here's how to minimize performance impact:

Caching Strategy

Cloud CDN caches compiled workflow definitions at edge locations. The system only fetches new versions when explicitly requested, so lookups for stable versions are served from cache and skip the round trip to origin storage.

Redis clusters cache frequently accessed version metadata, reducing BigQuery query load. Hot workflows remain memory-resident across agent instances.

Version Pruning

Old versions consume storage and complicate dependency analysis. Automated pruning policies retire versions that:

- Haven't handled traffic in 90 days
- Show inferior performance to newer versions
- Depend on deprecated model versions
- Lack active customer pins

Archived versions move to Coldline storage, remaining accessible for compliance but not actively loaded.
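The text does not say how the pruning criteria combine, so the sketch below picks one reading and labels it as an assumption: an active customer pin always protects a version, and otherwise any one of the remaining three criteria is enough to archive it.

```python
from datetime import datetime, timedelta


def should_archive(*, last_traffic_at: datetime, now: datetime,
                   has_customer_pins: bool, uses_deprecated_model: bool,
                   outperformed_by_newer: bool, idle_days: int = 90) -> bool:
    """Decide whether a workflow version should move to archive storage."""
    # Assumption: a pinned version is never archived automatically.
    if has_customer_pins:
        return False
    idle = now - last_traffic_at >= timedelta(days=idle_days)
    # Assumption: any single remaining criterion qualifies the version.
    return idle or uses_deprecated_model or outperformed_by_newer
```

A stricter policy could require several criteria at once, for example idle and outperformed, so that a recent rollback target is never archived by accident.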

Real-World Lessons from Production Deployments

Three years of managing versioned AI agent workflows taught me several non-obvious lessons:

Version Explosion Is Real

Without discipline, workflow versions proliferate uncontrollably. One client accumulated 847 versions in six months, making management impossible. Now I enforce:

- Mandatory version consolidation reviews monthly
- Automatic minor version deprecation after 30 days
- Clear version numbering schemes (major.minor.patch)
- Required sunset dates for experimental versions

Rollback Isn't Always Backward

Sometimes the best "rollback" involves rolling forward to a new version that fixes issues while preserving new functionality. Maintain fix-forward procedures alongside traditional rollbacks.

Version Metrics Tell Stories

Analyzing version performance over time reveals optimization opportunities. One workflow showed a 30% token reduction across versions as its prompts were refined. Another revealed increasing tool call rates, indicating growing agent sophistication.

Building Your Version Management System

If you're implementing version management for AI agent workflows, start with these foundations:

Define Version Boundaries: Clearly specify what constitutes a new version versus a configuration change. Include prompt modifications, tool additions, and flow logic changes.

Implement Comprehensive Logging: Every version interaction should log to Cloud Logging with structured metadata. You'll need this for debugging and analysis.

Design for Rollback from Day One: Retrofitting rollback capabilities is far harder than building them in from the start. Include rollback hooks in your initial architecture.

Automate Everything: Manual version management doesn't scale. Use Cloud Build, Cloud Functions, and Cloud Scheduler to automate creation, testing, deployment, and cleanup.

Monitor Proactively: Set up Cloud Monitoring alerts before issues arise. Track version-specific metrics and establish baselines early.

The Future of AI Agent Versioning

As AI agents become more sophisticated, versioning strategies must evolve. I'm seeing the emergence of:

Adaptive Versioning: Agents that modify their own workflows based on performance data, creating micro-versions automatically.

Federated Version Management: Multi-agent systems where each agent manages independent versions while maintaining system coherence.

Version Learning: ML models that predict version performance before deployment based on workflow characteristics.

The tools and patterns I've outlined here provide a foundation, but expect rapid evolution as the field matures.

Implementing robust versioning and rollback strategies isn't optional for production AI agents. It's the difference between confident iteration and production anxiety. Start with basic version tracking, add automated testing, implement safe rollbacks, and continuously refine based on operational experience. Your future self, faced with a critical production issue at 3 AM, will thank you for the investment.